Knock AI

AI-powered lead intelligence for B2B sales teams.

Timeline

Dec 2024 — Early 2025

Platform

Web App

My Role

UI/UX Designer

Overview

Knock AI is a B2B sales engagement platform that enriches leads with intent signals and routes high-value buyers to the right rep in real time - cutting through the noise so sales teams only spend time on prospects who are ready to talk.

I joined as the sole product designer, building the platform from scratch over the course of a year - no design team, no design system, and only two early customers to learn from. Every decision was grounded in competitive analysis, direct customer feedback, and weekly ship cycles.

Who we're designing for

Our users fell into two distinct groups — and they needed very different things from the same product:

Experienced SDRs

Know exactly which routing rule to use. Just want to create the link and go. Extra steps slow them down.

Junior SDRs

Unsure which routing rule fits. Don't fully understand what happens after creating a link. Need guidance without feeling lost.

The Challenge

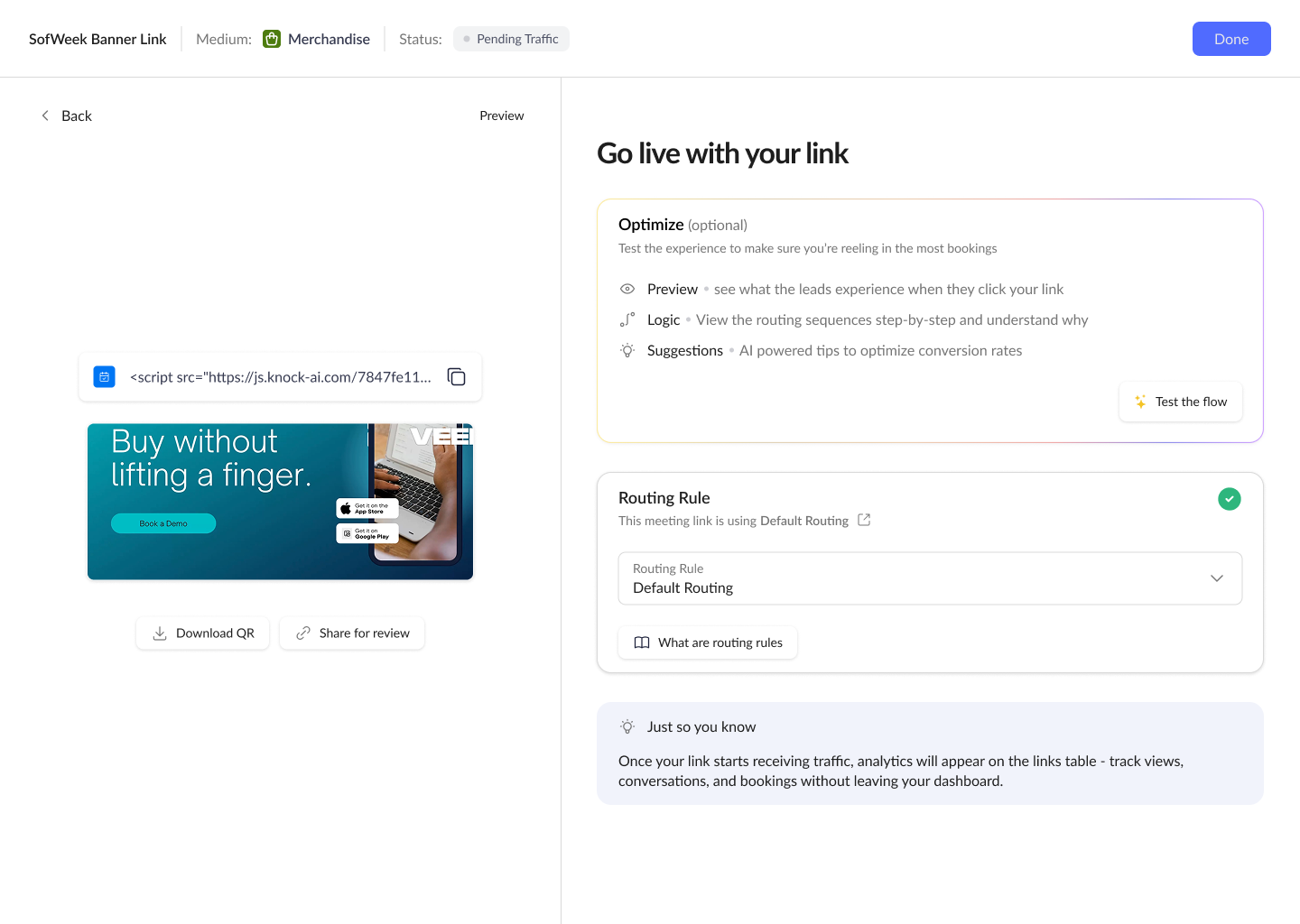

Meeting links are the primary touchpoint between a sales team and their leads - every link generates a chat or booking page tied to a routing rule that determines which rep handles the conversation. But the backend was recording no analytics for the links being created. Our two customers also told us directly that their links weren't bringing in as many leads as expected - so they kept creating more, hoping quantity would compensate for quality. The result was a high volume of generic, underperforming links instead of a few well-configured ones. Why was this happening?

The original post-creation screen

Finding the root cause

Before jumping to solutions, I needed to understand where the breakdown was actually happening. Customer feedback pointed to the problem, but not the cause. I evaluated several testing methods to find one that would give us the qualitative depth we needed with only two customers to work with.

Moderated usability testing

Primary method. The highest-signal option. Our two customers gave us access to real reps who had already created links - we could observe the exact moment they stalled and probe why.

Unmoderated remote testing

Considered. Tools like Maze would scale better, but generic panels don’t include B2B sales reps. Without a moderator, we’d miss the critical “why” behind each hesitation.

Focus groups

Ruled out. Group dynamics risk the loudest voice shaping the narrative. We needed individual behavioral evidence, not group opinions.

How we conducted the sessions

We ran moderated usability sessions with 6 SDRs - a mix of senior and junior reps from our two customers. Each session was designed around core UX research principles:

Think-aloud protocol

Participants narrated their thinking as they worked - exposing what they expected, where they hesitated, and what confused them.

Task-based scenarios

Tasks were framed as goals, not instructions - “get this link ready for your team to use” instead of “click the activate button.”

Neutral probing

No leading questions. “What was going through your mind?” instead of “did you find that confusing?”

Mitigating observer effect

We framed each session as “testing the product, not you” - encouraging honest responses over polite completion.

What we discovered

The post-creation screen was a dead-end - no deployment guidance, no clear next step after saving.

Junior SDRs specifically stalled after save. Because the link setup was swift and always assigned the default routing rule, they were unsure if they had skipped a step or done something wrong.

Reps who did activate links received no visibility into performance afterward. They got some booking requests - but fewer than expected - which left them questioning whether their setup was configured correctly.

The feature existed, but it wasn't delivering value. Iterating on the post-creation flow became the top priority.

End State Iteration

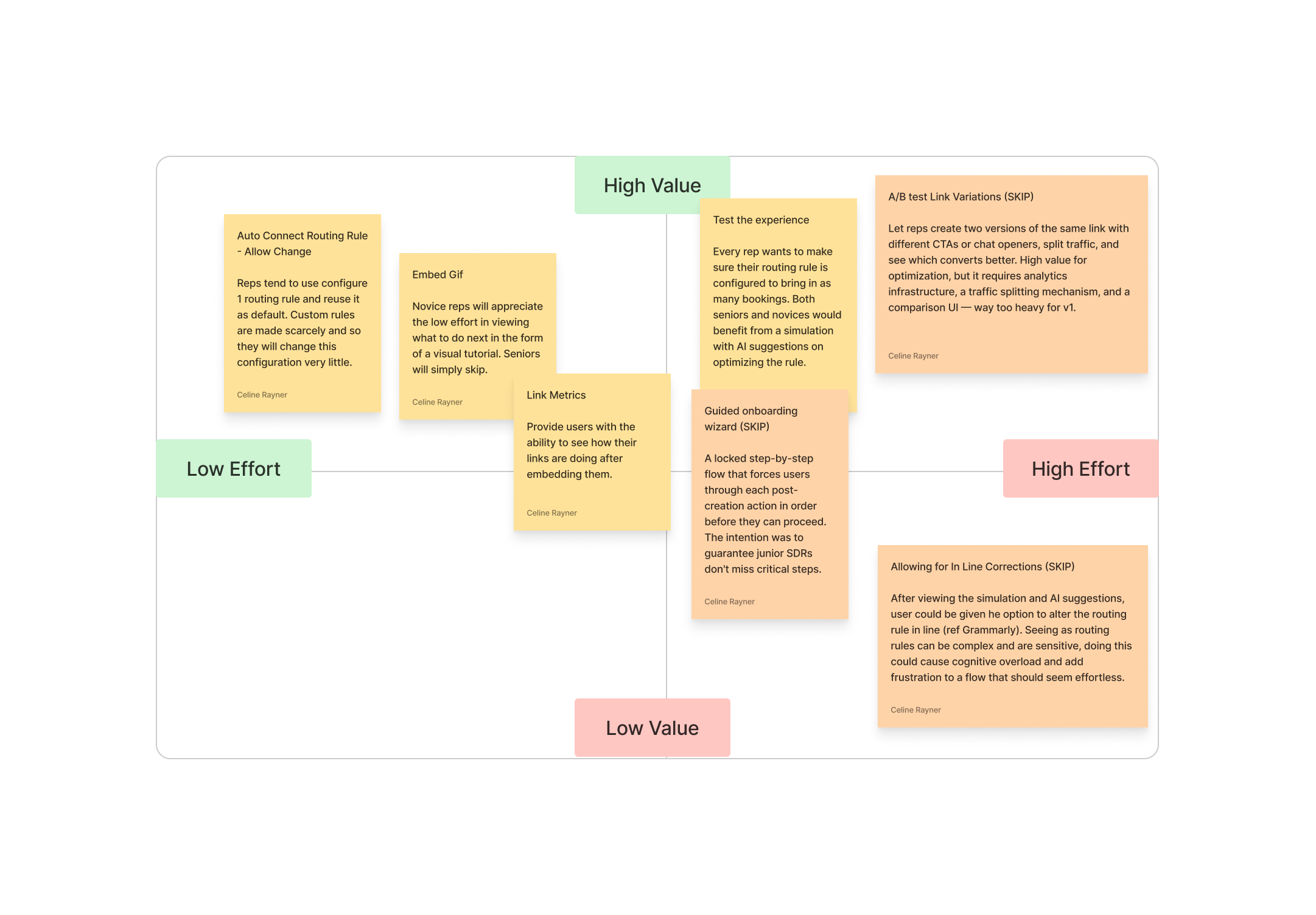

Three critical decision points shaped the redesigned post-creation experience:

Decision 01

Decision Matrix

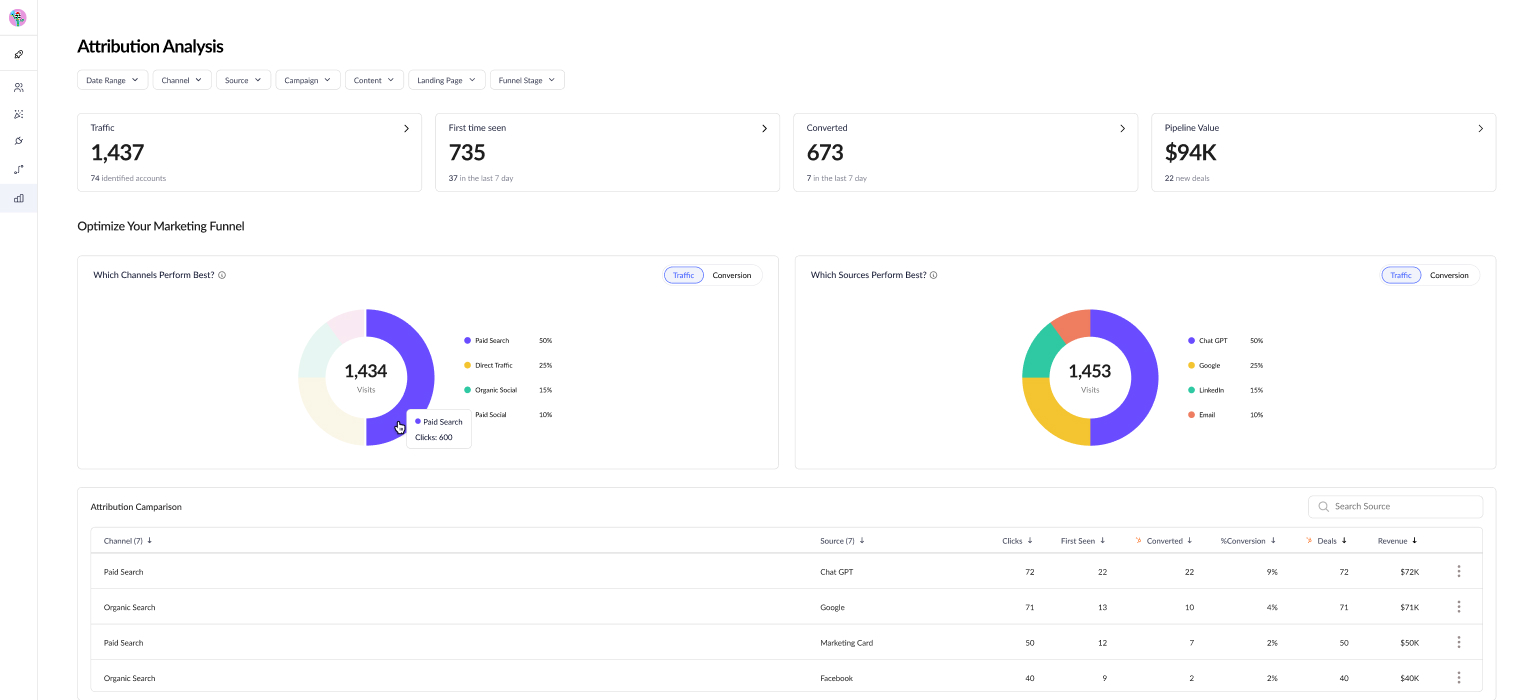

After gathering all the feedback from the usability sessions, I needed to decide which features to incorporate into the redesigned flow. I mapped every potential post-creation action onto a value vs. effort matrix, weighing both development and design effort against our time constraints. Four features landed in the high-value quadrant - everything else was deprioritized or saved for a future iteration.
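A value vs. effort matrix like the one described above can be sketched in a few lines. This is a minimal illustration, not the actual matrix from the project: the candidate names, the 1 to 5 scoring scale, the threshold, and the quadrant labels are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A potential post-creation action with hypothetical scores."""
    name: str
    value: int   # 1 (low) to 5 (high): assumed user value
    effort: int  # 1 (low) to 5 (high): assumed design + development effort


def quadrant(candidate: Candidate, threshold: int = 3) -> str:
    """Place a candidate in one of four value/effort quadrants."""
    high_value = candidate.value >= threshold
    high_effort = candidate.effort >= threshold
    if high_value and not high_effort:
        return "high value, low effort"
    if high_value and high_effort:
        return "high value, high effort"
    if not high_value and not high_effort:
        return "low value, low effort"
    return "low value, high effort"


# Placeholder candidates and scores, invented purely for illustration.
candidates = [
    Candidate("candidate A", value=5, effort=2),
    Candidate("candidate B", value=4, effort=4),
    Candidate("candidate C", value=2, effort=1),
    Candidate("candidate D", value=1, effort=5),
]

for c in candidates:
    print(f"{c.name}: {quadrant(c)}")
```

Scoring design and development effort as one combined number keeps the sketch simple; a real matrix might track them separately before weighing them against the timeline.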

Decision 02

How other tools handle post-creation

With only two customers to learn from, competitive analysis was a primary research tool alongside the usability sessions. I audited how Calendly, HubSpot, Drift, and Intercom handle post-creation flows. Most either dead-end after save or bury next steps in settings. Intercom came closest with inline setup, but none offered routing transparency or AI-powered optimization. These gaps shaped what we built.

Competitive analysis grid

Decision 03

Presenting the next steps

In the old design, the QR code sat center stage — but during testing, no one used it. The most prominent element on the page was providing zero value. The redesign rethinks the entire hierarchy using a split-screen layout that serves both personas without slowing either one down.

Link + deployment guide promoted to center stage. The embed script and a looping GIF showing how to use it now anchor the left panel, directly answering “what do I do with this?” for junior reps.

Optimization flow given priority on the right. The simulator - Knock's strongest differentiator - is surfaced prominently rather than hidden behind a collapsible card. Both personas benefit: juniors learn how their setup works, seniors use it to fine-tune performance.

Routing rules made transparent. The assigned rule is always visible with the option to change it or open it in a new tab. No more under-the-hood actions - users see exactly what's driving their link's behavior.

Analytics tip closes the loop. A subtle prompt tells users where to find link performance data on the main table after embedding - so they know the story doesn't end at deployment.

The Outcome and Impact

The routing system ships as two connected flows — routing rules and meeting links — that work independently but guide users naturally between them.

57%

Faster rule creation

4m 12s down to 1m 48s

94%

Task completion rate

Up from 67%

91%

Link activation rate

Up from 72%
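The 57% figure follows directly from the two timings quoted above. A small sketch of the arithmetic, where the helper names `parse_duration` and `percent_reduction` are assumptions introduced for this example:

```python
import re


def parse_duration(text: str) -> int:
    """Convert a duration such as '4m 12s' into whole seconds."""
    match = re.fullmatch(r"(?:(\d+)m)?\s*(?:(\d+)s)?", text.strip())
    if match is None or not any(match.groups()):
        raise ValueError(f"unrecognized duration: {text!r}")
    minutes = int(match.group(1) or 0)
    seconds = int(match.group(2) or 0)
    return minutes * 60 + seconds


def percent_reduction(before: int, after: int) -> float:
    """Relative reduction from `before` to `after`, as a percentage."""
    return (before - after) / before * 100


before_s = parse_duration("4m 12s")  # 252 seconds
after_s = parse_duration("1m 48s")   # 108 seconds
reduction = percent_reduction(before_s, after_s)

print(f"{before_s}s -> {after_s}s: {reduction:.0f}% faster")
```

The other two stats are changes between two rates, so they are easier to read as percentage-point differences: 67% to 94% is 27 points, and 72% to 91% is 19 points.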

How we measured

Creation time was benchmarked through internal dogfooding sessions with 8 participants from the sales and CS teams, timed before and after the redesign. Completion and activation rates were tracked via Mixpanel event funnels. Completion rate is the share of users who started a routing rule and successfully saved it in the same session. Activation rate is the share of newly created meeting links that were connected to a routing rule within their first session.
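As a rough illustration of the completion metric, the sketch below computes a same-session funnel rate over a list of exported events. It is plain Python, not the Mixpanel API, and the event names (`rule_creation_started`, `rule_saved`), the field names, and the sample data are all hypothetical.

```python
from collections import defaultdict

# Hypothetical event names; the real tracking plan may differ.
START_EVENT = "rule_creation_started"
SAVE_EVENT = "rule_saved"


def completion_rate(events: list[dict]) -> float:
    """Share of sessions that started rule creation and also saved a rule.

    Each event is a dict with 'session_id' and 'event' keys. Event order
    within a session is not checked in this simplified version.
    """
    events_by_session: dict[str, set[str]] = defaultdict(set)
    for event in events:
        events_by_session[event["session_id"]].add(event["event"])

    started = [seen for seen in events_by_session.values() if START_EVENT in seen]
    if not started:
        return 0.0
    completed = [seen for seen in started if SAVE_EVENT in seen]
    return len(completed) / len(started)


# Invented sample data: three sessions start a rule, two of them save it.
sample_events = [
    {"session_id": "s1", "event": START_EVENT},
    {"session_id": "s1", "event": SAVE_EVENT},
    {"session_id": "s2", "event": START_EVENT},
    {"session_id": "s3", "event": START_EVENT},
    {"session_id": "s3", "event": SAVE_EVENT},
]

rate = completion_rate(sample_events)
print(f"Completion rate: {rate:.0%}")
```

The activation rate could be computed the same way by grouping on a link identifier instead of a session and checking for a hypothetical "connected to routing rule" event within the link's first session.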

More from Knock AI

The routing system was the core challenge, but I designed across the full platform. Here are a few other areas I contributed to:

Design System

Built a component library from scratch — buttons, inputs, modals, cards — to keep the UI consistent across weekly feature releases.

Chat Links

Embeddable links that reps plug into their websites, emails, and social channels so leads can start a conversation on any platform.

Settings

A workspace configuration page for managers to handle team setup, integrations, permissions, and billing.

What I Took Away

Designing with limited direct user access forced me to be resourceful: competitive audits, forum analysis, and CRM benchmarking became core research tools. It also made me a stronger communicator: every design decision needed a clear rationale, because I couldn't always point to a usability test and say “users struggled here.”

If I could do it again, I'd push harder for even lightweight user validation earlier — guerrilla testing, internal dogfooding, anything to close the feedback loop faster. The work shipped and the system holds together, but some decisions were educated guesses that I'd rather have validated.